An intro to distributed AI

Home labs, distributed AI, copilots, ML models, virtual agents: what a time to be a software developer! What a time to invest in or launch a startup, am I right?

The OpenAI CEO's rallying cry to investors for a staggering $7 trillion (seriously?!) reflects some of the transformations underway. Nvidia's rise to dominance in AI chips and GPUs raises critical questions about the landscape of competition, innovation, and privacy.

Reflecting on the last year, it's clear that we've witnessed an extraordinary period in the tech industry. AI's rapid development and adoption have been incredible. The impact on web hosting and software development as a whole is vast, touching nearly every aspect of what we do. Even if you are just "tech-adjacent," in finance or media for example, your bottom line will be impacted. Whether you see AI as a plague, a tool, an opportunity, or a potential money maker, there's a lot to talk about.

For companies like Opalstack and the wider community of developers, privacy advocates, and tinkerers, the concept of distributed AI workloads is extremely relevant. Much like the pioneering spirit of the early Bitcoin mining era, there's a burgeoning sense of creativity and innovation in the developer community.

In this article we'll cover the current state of the art, with code examples and two open source projects, AI Ranch and EmojiTarot, that we've built and released into the public domain so you can participate in this emerging sector.

New players in an old game

There are some new players in the arena, and that means new places to find information. The "GitHub" of machine learning is Hugging Face. Download an ML model, use it to build your next application, and don't worry about training the model, because some of the largest and most powerful tech companies have already done it. ML models on their own are just blobs of binary data; it's what you do with them that matters.

There's also an ecosystem of web servers with JSON APIs that download models from public repositories, load them into memory on your hardware, and give you full API access. This ecosystem has been changing rapidly, but one project that seems to be gaining traction is Ollama: an MIT-licensed project with more than 30k stars on GitHub, a strong user base, and a promising future.
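To make "full API access" concrete, here is a minimal sketch of calling Ollama's `/api/generate` endpoint from Python using only the standard library. It assumes Ollama is running on the same machine on its default port (11434) and that you have already pulled a model; `llama2` is just an example model name.

```python
import json
import urllib.request

# Assumption: Ollama is running on this machine on its default port.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model: str, prompt: str) -> dict:
    """Build the JSON body for Ollama's /api/generate endpoint.

    "stream": False asks for a single JSON reply instead of a token stream.
    """
    return {"model": model, "prompt": prompt, "stream": False}


def generate(model: str, prompt: str, base_url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """POST a prompt to Ollama and return the generated text."""
    body = json.dumps(build_generate_request(model, prompt)).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=body,  # supplying data makes urllib send a POST
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        # The non-streaming reply carries the generated text in "response".
        return json.loads(resp.read())["response"]


# Usage (requires a pulled model, e.g. `ollama pull llama2`):
# print(generate("llama2", "Why is the sky blue?"))
```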

In simple terms, this means all the pieces needed to build AI chatbots, image generators, and voice synthesizers are publicly available and ready to be hacked on. They can run at your house on hardware you own. We are here to help you monetize them.

There are hundreds of models to choose from, and each one has a different use case. Other major projects in this space include llama.cpp, koboldcpp, Stability AI, and Mimic 3. As a web hosting company, we're a bit concerned about the huge, demanding workloads of AI models.

Luckily, Opalstack's legacy fits right into the new ecosystem.

BYOB – distributed AI is your computer connected to the cloud.

Opalstack is a company with its heart in open source and maker culture. Each of the co-founders has had a long career in tech with deep experience in open source software. I, John the CXO, have always had a passion for electronics and Linux. In the last decade, I’ve worked from the website frontend all the way down the stack to etching my own PCBs.

Most of that hardware experience was with Arduino and the ESP8266 and ESP32 platforms. This path, and the code that came with it, led to some of the earliest iterations of the Opalstack platform. Some of the projects I worked on sent data from small external devices to a central cloud-hosted Django application. If we can connect small local devices to the cloud, then we should be able to connect home lab hardware that can handle the high-demand workloads of the new AI tools the same way, right?

Fueled by curiosity and the quick rise of AI tooling, I've spent the last few months figuring out all the possible ways of integrating tools like Ollama with Opalstack.

I've seen three general approaches for integrating AI apps that run locally (on your own hardware) with our platform. (If you want to run major hardware/GPUs in the cloud, send us an email and we'll help you get going.)

- Dynamic DNS

- SSH Tunnel + Proxy Server

- SSH Tunnel + Application Layer

Each of these options has its pros and cons and each one works on Opalstack.

Dynamic DNS

So let's dive into the good stuff: the code. If you haven't seen the Opalstack Python library, you should definitely check it out (read our post about it: import opalstack). It is key to all of the code examples in this article. Not to brag, but it's super slick, async out of the box, and easy to integrate into a task queue like Celery. Here is a script that enables dynamic DNS on our platform:

```python
import requests
import opalstack

# Opalstack API settings
api_token = '123456789'  # Replace with your Opalstack API token

# Domain settings
domain_name = 'example.com'  # Replace with your domain name

# Initialize Opalstack API
opal = opalstack.Api(token=api_token)


def get_wan_ip():
    """
    Get the current WAN IP using a public API.
    """
    response = requests.get('https://api.ipify.org?format=json', timeout=10)
    response.raise_for_status()
    return response.json()['ip']


def check_domain_in_list(domain_id, records_list):
    """
    Find the A record belonging to the given domain ID.

    :param domain_id: The domain ID to search for.
    :param records_list: The list of records, each a dictionary.
    :return: The matching A record (a dict), or None if there isn't one.
    """
    for record in records_list:
        # Only match A records; the same domain may also have MX, TXT, CNAME, etc.
        if record['domain'] == domain_id and record.get('type') == 'A':
            return record
    return None


def update_a_record(domain, ip_address):
    """
    Create or update the A record for the specified domain with the provided IP address.
    """
    domains = opal.domains.list_all()

    # Find the domain's ID by exact (whitespace-trimmed) name match
    domain_id = None
    for d in domains:
        if d['name'].strip() == domain.strip():
            domain_id = d['id']
            break

    if not domain_id:
        print(f"Domain '{domain}' not found.")
        return

    records = opal.dnsrecords.list_all()
    a_record = check_domain_in_list(domain_id, records)

    if not a_record:
        print(f"No A record found for '{domain}'. Creating one.")
        opal.dnsrecords.create(domain_id, 'A', ip_address, 600)
        print(f"A record created for IP {ip_address}")
    elif a_record.get('content') == ip_address:
        # The record already points at this IP; skip the API write.
        print(f"A record already points to {ip_address}")
    else:
        opal.dnsrecords.update_one({'id': a_record['id'], 'type': 'A', 'content': ip_address, 'ttl': 600})
        print(f"A record updated to IP {ip_address}")


# Main execution
if __name__ == '__main__':
    current_ip = get_wan_ip()
    update_a_record(domain_name, current_ip)
```

Our API and Python library were born for this moment. Beyond serving as a standard dynamic DNS solution, they offer many customizable options that let you integrate your home lab hardware into cloud solutions.
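One customization worth considering when the script runs on a schedule is a pair of guards: reject anything from the IP lookup that isn't a valid IPv4 address (an A record can only hold IPv4), and remember the last IP you pushed so you only call the API when it actually changes. This is a minimal sketch using the standard library; the cache file location `~/.ddns_last_ip` and the helper names are hypothetical.

```python
import ipaddress
from pathlib import Path

# Hypothetical cache location; any writable path works.
CACHE_FILE = Path.home() / ".ddns_last_ip"


def validate_ipv4(raw: str) -> str:
    """Return the address as a normalized string, or raise ValueError.

    An A record only holds IPv4, so reject IPv6 addresses and junk
    such as an error page returned by the IP lookup service.
    """
    addr = ipaddress.ip_address(raw.strip())  # raises ValueError on junk
    if addr.version != 4:
        raise ValueError(f"{raw!r} is not an IPv4 address")
    return str(addr)


def ip_changed(current_ip: str, cache_file: Path = CACHE_FILE) -> bool:
    """Compare against the last IP pushed; True means an update is needed."""
    try:
        last_ip = cache_file.read_text().strip()
    except FileNotFoundError:
        return True  # First run: nothing cached yet.
    return last_ip != current_ip


def remember_ip(current_ip: str, cache_file: Path = CACHE_FILE) -> None:
    """Record the IP after a successful DNS update."""
    cache_file.write_text(current_ip + "\n")
```

Wired into the script above, the main block would become `ip = validate_ipv4(get_wan_ip())`, then call `update_a_record(domain_name, ip)` and `remember_ip(ip)` only when `ip_changed(ip)` is true. Run from cron every few minutes, that keeps API traffic to the moments your ISP actually hands you a new address.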

Pros: No connection timeouts.

Cons: Your IP address is exposed.

Presenting Emoji Tarot: SSH Tunnel + Proxy Server

On to the next option. You can use a frontend web server to proxy an SSH tunnel to an SSL endpoint. On our platform, the App/Site routing features make setting this up a breeze. This has some major improvements over direct DNS routing in terms of security and transparency. If you want open access to the LLM without an API token or session-based permissions, this is a good option. We used this approach for a code example with EmojiTarot (you can see a live example at emojitarot.xyz). If you are going to route through the proxy frontend, don't forget to set the OLLAMA_ORIGINS environment variable in Ollama's systemd service:

```ini
[Service]
Environment="OLLAMA_ORIGINS=*"
```
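The tunnel half of this setup can be sketched in Python as a small supervisor around OpenSSH's reverse forwarding (`-R`), which makes your local Ollama port reachable on the server's loopback interface, where the frontend proxy can pick it up. The SSH login and `REMOTE_PORT` below are placeholders (assumptions), standing in for your own account and whatever port your proxy is configured to forward to; 11434 is Ollama's default port.

```python
import subprocess
import time

# Placeholders (assumptions): use your own SSH login and the port your
# frontend proxy forwards to on the server.
SSH_TARGET = "myuser@your-server.example.com"
REMOTE_PORT = 12345
LOCAL_OLLAMA_PORT = 11434  # Ollama's default listening port


def build_tunnel_cmd(target: str, remote_port: int, local_port: int) -> list[str]:
    """Build an OpenSSH reverse-tunnel command.

    -N  forward ports only, don't run a remote command.
    -R  connections to remote_port on the server's loopback are
        forwarded back to local_port on this machine.
    """
    return [
        "ssh", "-N",
        "-o", "ExitOnForwardFailure=yes",  # exit if the remote port can't be bound
        "-o", "ServerAliveInterval=30",    # send keepalives every 30 s
        "-o", "ServerAliveCountMax=3",     # drop after 3 missed keepalives
        "-R", f"127.0.0.1:{remote_port}:localhost:{local_port}",
        target,
    ]


def run_forever(cmd: list[str], retry_delay: float = 10.0) -> None:
    """Restart the tunnel whenever ssh exits (network drop, server reboot)."""
    while True:
        subprocess.run(cmd)
        time.sleep(retry_delay)


# Usage: run_forever(build_tunnel_cmd(SSH_TARGET, REMOTE_PORT, LOCAL_OLLAMA_PORT))
```

Key-based SSH auth is assumed, since the loop can't answer a password prompt. Tools like autossh do the same reconnect job; a systemd service running the ssh command with `Restart=always` is another common choice.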

Pros: Your IP address is not exposed

Cons: A 60-second timeout means your LLM had better be tuned for speed.
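A couple of Ollama request fields help with a hard timeout: `options.num_predict` caps how many tokens are generated, and `keep_alive` keeps the model loaded between requests so later calls skip the slow model load. The sketch below uses both and sets a client-side timeout a few seconds under 60. The URL passed in is whatever public endpoint your proxy exposes (an assumption), and the token cap and keep-alive duration are illustrative values to tune for your hardware.

```python
import json
import urllib.request

PROXY_TIMEOUT = 60  # seconds, the limit described above


def build_fast_request(model: str, prompt: str, max_tokens: int = 200) -> dict:
    """Request body tuned to finish well inside the proxy timeout."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,
        "keep_alive": "30m",  # keep the model in memory between calls
        "options": {"num_predict": max_tokens},  # cap generated tokens
    }


def generate_within_timeout(url: str, model: str, prompt: str) -> str:
    """POST to an Ollama /api/generate URL and return the generated text."""
    body = json.dumps(build_fast_request(model, prompt)).encode("utf-8")
    req = urllib.request.Request(url, data=body, headers={"Content-Type": "application/json"})
    # urlopen's timeout applies per socket operation. With stream=False no
    # bytes arrive until generation finishes, so this effectively gives up
    # a few seconds before the proxy would.
    with urllib.request.urlopen(req, timeout=PROXY_TIMEOUT - 5) as resp:
        return json.loads(resp.read())["response"]
```

Picking a smaller model or quantization helps more than any request option. The token cap simply ensures a long answer can't push a request past the limit.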

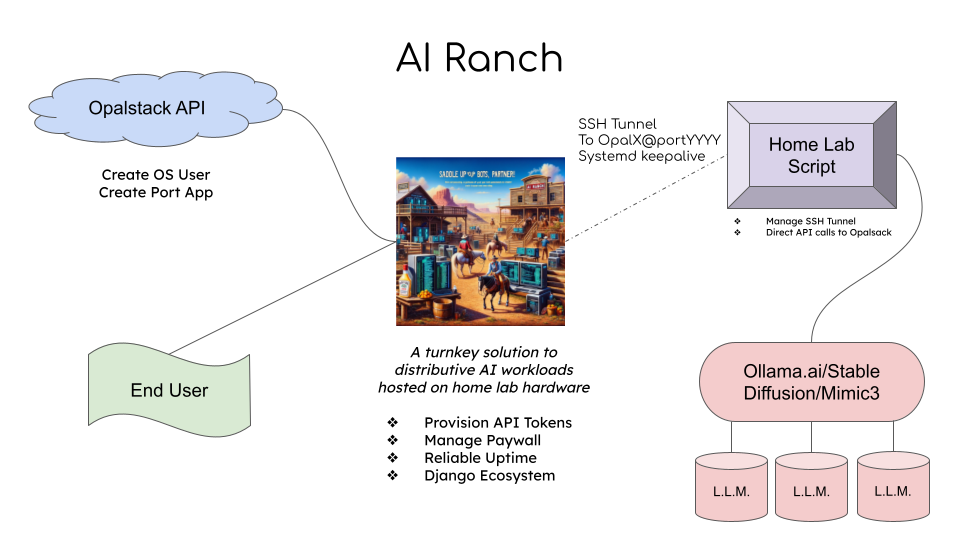

Presenting AI Ranch: SSH Tunnel + Application Layer

Finally, you can use an SSH tunnel combined with a full application layer. The real flex. You know, the good stuff?

This option is so flexible that you can build a full application with custom business logic, a custom model, and any other bells and whistles. It needs a bit more explanation and a full application demo to really show off, so saddle up and head over to AI Ranch, cowpoke (or llama, I reckon)!

AI Ranch is an out-of-the-box solution that provides everything you need to build a distributed AI application that connects your home lab to the cloud. It will help you wrangle paywalled/templated access to a herd of Ollama (or other model) endpoints. It's built with Django, Celery, and Opalstack.
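To illustrate the general idea of paywalled access to a herd of endpoints (not AI Ranch's actual code), here is a hypothetical sketch of two building blocks: a token check that stores only hashes of paid API tokens, and a round-robin pool that spreads requests across several home-lab Ollama URLs. All names here are invented for the example.

```python
import hashlib
import itertools
from dataclasses import dataclass, field


def hash_token(token: str) -> str:
    """Store only a SHA-256 digest of each token, never the raw token."""
    return hashlib.sha256(token.encode("utf-8")).hexdigest()


def has_paid_access(token: str, paid_token_hashes: set[str]) -> bool:
    """Hypothetical paywall check: is this token's hash in the paid set?"""
    return hash_token(token) in paid_token_hashes


@dataclass
class EndpointPool:
    """Hypothetical round-robin pool of Ollama base URLs, one per home-lab box."""

    urls: list[str]
    _cycle: itertools.cycle = field(init=False, repr=False, compare=False)

    def __post_init__(self) -> None:
        if not self.urls:
            raise ValueError("EndpointPool needs at least one URL")
        self._cycle = itertools.cycle(self.urls)

    def next_url(self) -> str:
        """Return the next endpoint in rotation."""
        return next(self._cycle)
```

In a Django and Celery stack, a view would typically run the access check and then hand the prompt to a Celery task that posts to `pool.next_url()`, so a slow model never ties up a web worker. Health checks and per-user quotas would sit on top of this.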

Of course, I suggest you just go read the code, sign up for a 14-day free trial, deploy it, and try it out. You will need a local computer capable of running a model; an old gaming PC or laptop should be perfect for this.
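Before wiring a machine into the cloud, it's worth confirming it can actually serve a model. Ollama exposes a `GET /api/tags` endpoint that lists the models pulled locally; this small sketch queries it, assuming Ollama is on its default local port.

```python
import json
import urllib.request


def model_names(tags: dict) -> list[str]:
    """Extract model names from an Ollama /api/tags response."""
    return [m["name"] for m in tags.get("models", [])]


def local_models(base_url: str = "http://localhost:11434") -> list[str]:
    """Ask the local Ollama server which models it has pulled."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return model_names(json.loads(resp.read()))


# Usage: print(local_models())  # raises URLError if Ollama isn't running
```

An empty list means the server is up but no models have been pulled yet.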

But here is a bird's-eye view of the project:

Pros: You have the most control and you can add a paywall to your model.

Cons: It’s extra work to set up and manage.

If you are playing with some of these AI tools locally, I hope this has given you some inspiration. Maybe you have an idea for an app, or you suddenly found a use for that 10-year-old gaming computer you've been keeping around. Whatever your inspiration may be, give AI Ranch a try and have fun building your own distributed AI!

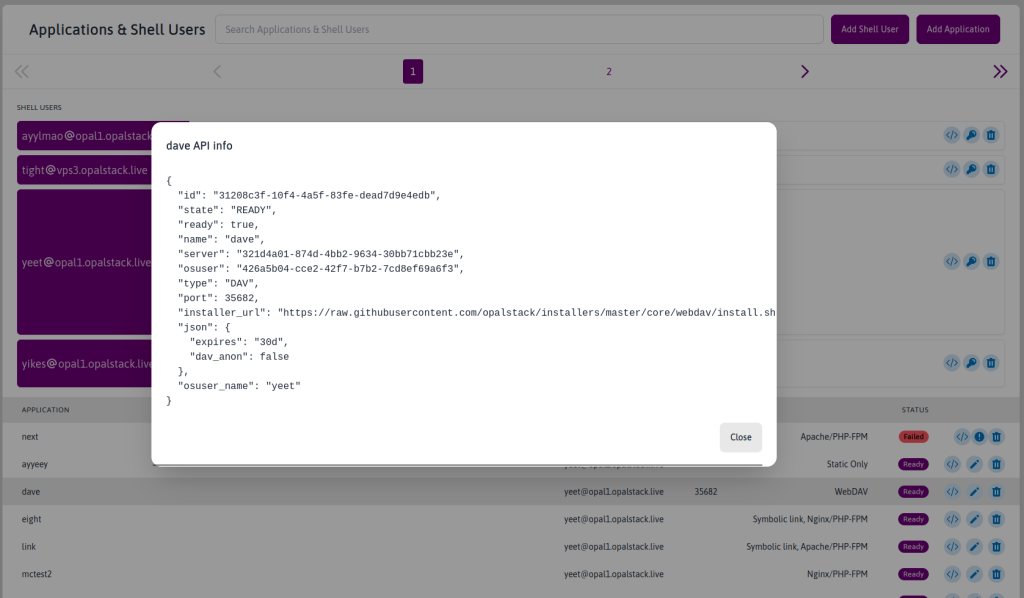

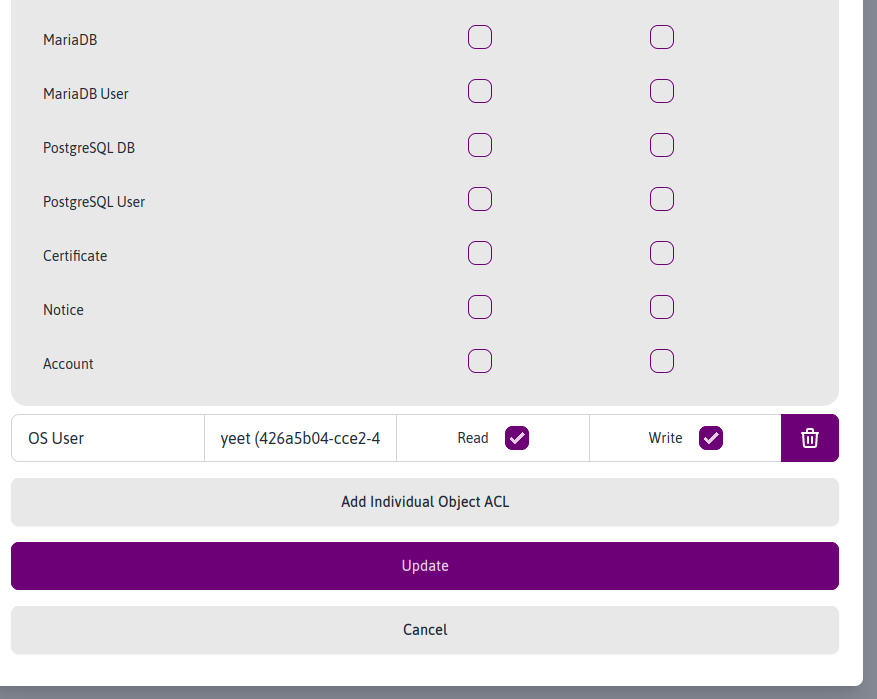

API Update

Just one more thing… here's a sneak peek of a major upgrade we're building: granular token/API permissions. These will let you allow or restrict access to resources per API token. Combined with our API and Python library, these permissions will help you build better, more secure applications on Opalstack.

Some sample screenshots are below, because who doesn't love a teaser photo?